In my last post, I argued that the key to the success – and perhaps even the survival – of the biopharma industry is to excel at risk management. We are in an inherently risky business, where there are thousands of ways to fail, some of them breathtakingly expensive. But we need to take risks, occasionally some truly audacious ones, to create the breakthrough therapies that make a real difference in patients’ lives. So we had better be good at it.

But how?

The hypothesis as the root of risk

Those of you who know me in real life are probably sick of hearing me wax lyrical on the primacy of the hypothesis. I know, I’m sorry. But the hypothesis is one of the greatest intellectual creations of our species. It helps us make sense of a complex and ever-changing universe and it enables us to learn.

The hypothesis is pivotal at several stages in drug discovery and development.

- Hypothesis generation. This is where much of the magic happens in our business and, despite the dramatic evolution of our toolkit, it remains a profoundly human activity. Hypothesis generation requires a synaptic spark, an intuitive leap, a clarifying burst of pattern recognition that distills otherwise unstructured data into an idea. The “a-ha” moment. Hypothesis generation cannot be routinized or industrialized. It’s the one piece of our enterprise that is still best performed by individual scientists. It’s the critical capability we stand to lose as the industry hemorrhages scientists and governments starve academic science. But that’s a topic for another post.

- Hypothesis formulation. Pay attention now, because this is where the rubber hits the road – where risk is defined. Hypotheses are about what we believe, not what we know. Testing hypotheses converts belief to knowledge. As belief is turned into knowledge, risk is discharged. Therefore, to be most useful, hypotheses need to be as precise as possible about what we believe to be true – what needs to be true for a drug to succeed. As I outline below, a drug development program typically has multiple hypotheses, each associated with its own unique risk. Thus, another critical step here is allocating the risk across the different hypotheses. Is the risk largely technical – that is, if we can make it work, are we confident it will be a breakthrough drug? Or is there a significant component of market risk?

- Hypothesis testing. We all understand hypothesis testing – we learned about it in high school science lab. Honestly, it’s the simplest part of the whole process. If you’ve got a well-formulated, highly precise hypothesis, the critical experiment should essentially design itself. Yet, in our business, we typically spend most of our energy debating, designing and redesigning animal studies and clinical trials, and surprisingly little on debating and refining our underlying hypotheses. This, I think, is where we often go wrong, wasting precious resources on studies that neither answer the critical questions nor take real risks off the table.

The hypothesis triad

Most drug discovery programs require three different hypotheses.

- Biological hypothesis. What buttons do we believe this molecule pushes in target cells and what happens when these buttons are pushed? What biological pathways respond?

- Clinical hypothesis. When these pathways are impacted, why do we believe it will move the needle on parameters that matter to patients and physicians? How will this intervention normalize physiology or reverse pathology?

- Commercial hypothesis. If the first two hypotheses are correct, why do we believe anyone will care? Why will patients, physicians, and payers want this drug? How do we expect it to stand out from the crowd?

These are the questions that any clinical development program needs to answer along the way. And each clinical study needs to test one of these hypotheses. As I discussed last time, our key insight in the development of STX-100 was recognizing that testing the biological and clinical hypotheses required different experiments. We could test the biological hypothesis – that STX-100 treatment inhibits TGF-β signaling activity in the lung – in a relatively inexpensive but robust fashion. Testing the clinical hypothesis – that inhibition of TGF-β activity in the lung slows fibrosis and progressive loss of lung function – is a much more significant undertaking and a very different experiment. But it’s an investment of considerably lower risk once we know the results of the first study. And it’s an investment we don’t have to make at all if the biological hypothesis does not hold.

Absolute risk vs. distribution of risk

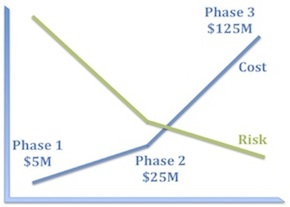

I’m sure it’s obvious to all of you that a well-constructed clinical development program must systematically reduce risk, but let’s take a moment to consider what that means in practice.

The first corollary is that experiments that bear 100% of the risk should be as inexpensive as possible. Consequently, program teams should ask themselves: what components of risk can we take off the table with the first $5–10M we spend on this program?

The second corollary is that the biggest checks you write should bear little residual risk. This is why good Phase 2 studies really matter. There should be little left in the way of technical risk by the time you get to Phase 3. Because Phase 3 failures really hurt and are the biggest drag on our productivity as an industry.

What may be less obvious is that the absolute risk of a program is less important than the distribution of risk.

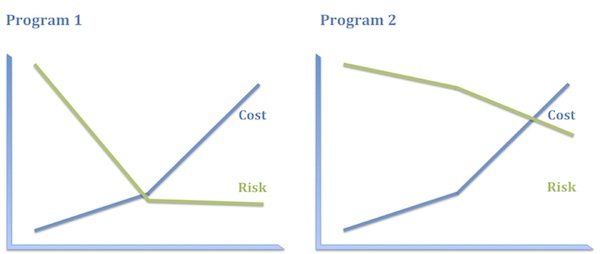

Consider, for example, the following two programs:

- Program 1. Antisense oligonucleotide, administered intrathecally, to babies, to promote an alternative splice in a pseudogene to replenish a protein missing because of a mutation. (Yes, I mean this.)

- Program 2. A small-molecule inhibitor of an immunokinase for rheumatoid arthritis. (No specific example in mind, I swear.)

I think most people would say that Program 1 is the riskier program. But consider where the risks actually lie in these programs, how they are distributed, and how much it will cost to discharge them.

Program 1 has plenty of risk, to be sure, but it’s very specific technical risk and it is highly front-end-loaded, which is to say it is discharged early and relatively inexpensively. Once you know that ASO treatment replenishes the protein missing in these patients, you have a pretty high level of confidence in both the clinical and the commercial hypotheses. Consequently, even though this program has high absolute risk, that full risk is borne only by the first 10–20% of the investment. The bulk of the investment is at substantially reduced risk.

In contrast, the biological and clinical risk for Program 2 is low. We know how to make and deliver kinase inhibitors and we have a solid understanding of how immunokinases impact disease activity. What we don’t know as we start out – and probably won’t know until the end of Phase 3, if then – is whether this compound will be sufficiently differentiated from the competition in RA to merit approval and reimbursement. In other words, the vast majority of the risk in Program 2 is commercial and that risk is discharged very late and at great expense. Consequently, the entire investment bears the bulk of the risk.

Imagine you were head of R&D and you had $300M to spend. You could either take ten shots like Program 1 or one like Program 2. These options carry essentially the same risk profile on that $300M. Which pipeline is more appealing to you? I thought so.
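To make the comparison concrete, here is a minimal back-of-the-envelope sketch. Every number in it is a made-up assumption for illustration, not data from any real program: the stage costs, the success probabilities, the two-stage split, and the idea that the ten Program 1 shots are independent of one another. The names `Stage`, `expected_loss`, and `p_overall` are hypothetical helpers. The sketch simply asks how much money you expect to have sunk into a failure when you stop at the first hypothesis that does not hold.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    cost_musd: float   # spend committed at this stage, in $M (assumed)
    p_success: float   # ASSUMED chance this stage's hypothesis holds


def expected_loss(stages):
    """Expected $M sunk into a failed program, stopping at the first failed stage."""
    loss, spent, p_reach = 0.0, 0.0, 1.0
    for s in stages:
        spent += s.cost_musd                         # cumulative spend through this stage
        loss += p_reach * (1 - s.p_success) * spent  # fail here: all spend so far is lost
        p_reach *= s.p_success                       # chance of reaching the next stage
    return loss


def p_overall(stages):
    """Chance that every stage's hypothesis holds."""
    p = 1.0
    for s in stages:
        p *= s.p_success
    return p


# Hypothetical Program 1: $30M total, high risk discharged by the first 15% of spend.
program_1 = [
    Stage("biological hypothesis", 4.5, 0.2),
    Stage("clinical and commercial hypotheses", 25.5, 0.8),
]

# Hypothetical Program 2: $300M total, most risk discharged only at the end.
program_2 = [
    Stage("biological and clinical hypotheses", 45.0, 0.9),
    Stage("commercial hypothesis (through Phase 3)", 255.0, 0.5),
]

for label, prog, n in [
    ("Ten shots like Program 1", program_1, 10),
    ("One shot like Program 2", program_2, 1),
]:
    # Treats the n shots as independent, which is itself an assumption.
    p_any = 1 - (1 - p_overall(prog)) ** n
    print(f"{label}: expected loss ${n * expected_loss(prog):.1f}M, "
          f"P(at least one success) {p_any:.2f}")
```

With these invented inputs, each Program 1 shot is individually much less likely to succeed than Program 2, yet the ten-shot portfolio loses far less capital in expectation, because most failures are caught after the small first check. Change the assumed probabilities and costs and the gap moves, but the mechanism is the point: what matters is how much money is spent before each risk is discharged.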

So what have we learned?

Let’s recap.

It all starts with hypotheses – how we generate them, formulate them, and test them. This is a deeply intellectual exercise that gets sadly short shrift in our business.

Hypotheses are intimately related to risk. They define what we believe to be true and therefore what we need to prove. Each of these beliefs is associated with the risk that it might be wrong. Testing a hypothesis converts belief to knowledge and, in doing so, discharges that risk.

The type and distribution of risk matter more than the absolute risk. We should never be discouraged from taking on significant technical risks if we can discharge them quickly and cheaply. In fact, that should be the basis of our whole enterprise.

Honestly, that’s all you need to know to run a successful R&D program, in my humble opinion. Why is that so hard?